One valuable component of research is data collection and the validity of your research information. Research is a serious undertaking. Consider what happens if your data, methodology, or outcome measures are not up to standard: if your sample size is incorrect or your information is compromised, you could disseminate the wrong information and affect populations at large. We have an ethical responsibility to do the right thing for our respective communities as well as our healthcare professionals. Those who choose to continue their studies at the doctoral level, whether focused on research methodology and its application to practice (for DNP candidates) or on the generation of new knowledge (for Ph.D. candidates), will quickly discover the importance of mastering these skills or having access to available resources.

Chapter 8: Sampling

Let us explore the types of sampling covered in this module. Sampling is the process of selecting units (e.g., people, organizations) from a population of interest so that, by studying the sample, we can reasonably generalize our results back to the population from which the units were chosen. Please review Chapter 8 in your Tappen class text.

Video: Sampling… What is sampling?

https://www.youtube.com/watch?v=Gs-gLeYuDZw

Article: Sampling in Research

http://www.indiana.edu/~educy520/sec5982/week_2/mugo02sampling.pdf

So how does one begin?

- Population identification

- Obtain a sampling frame

- Specify a sampling method for drawing from the sampling frame (this can be done randomly or non-randomly)

- Determine the sample size

Methods of sampling from a population

Simple random sampling. In this case, every individual is chosen entirely by chance, and each member of the population has an equal chance, or probability, of being selected. Please also become familiar with the following concepts.

- Systematic sampling.

- Stratified sampling.

- Cluster sampling.

- Convenience sampling.

- Quota sampling.

- Judgment (or purposive) sampling.

- Snowball sampling.
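
To make the probability-based methods above more concrete, here is a minimal Python sketch of simple random, systematic, and stratified sampling. It is an illustration only and is not taken from the Tappen text: the function names, the `frame` of patient records, and the `unit` field are all hypothetical, and the stratified version assumes proportionate allocation (the same fraction from every stratum).

```python
import random


def simple_random_sample(frame, n, seed=None):
    """Draw n units so that every unit has an equal chance of selection."""
    rng = random.Random(seed)
    return rng.sample(frame, n)


def systematic_sample(frame, n, seed=None):
    """Pick a random starting point, then take every k-th unit."""
    if not 0 < n <= len(frame):
        raise ValueError("n must be between 1 and the size of the frame")
    rng = random.Random(seed)
    k = len(frame) // n          # sampling interval
    start = rng.randrange(k)     # random start within the first interval
    return [frame[start + i * k] for i in range(n)]


def stratified_sample(frame, stratum_of, fraction, seed=None):
    """Group units into strata, then randomly draw the same fraction from each."""
    rng = random.Random(seed)
    strata = {}
    for unit in frame:
        strata.setdefault(stratum_of(unit), []).append(unit)
    sample = []
    for units in strata.values():
        size = max(1, round(len(units) * fraction))  # at least one unit per stratum
        sample.extend(rng.sample(units, size))
    return sample


# Hypothetical sampling frame: 100 patient records split across two units.
frame = [{"id": i, "unit": "ICU" if i % 2 == 0 else "ER"} for i in range(100)]

srs = simple_random_sample(frame, 10, seed=1)
systematic = systematic_sample(frame, 10, seed=1)
stratified = stratified_sample(frame, lambda p: p["unit"], 0.10, seed=1)
```

The non-probability methods in the list (convenience, quota, judgment, snowball) depend on researcher choice or participant referral rather than chance, so they are not sketched here.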

Chapter 9: Reliability

Class, reliability and validity are the concepts most closely associated with the quality of measurement in your research endeavors. Chapter 9 emphasizes reliability. It also mentions validity, but most of that content belongs to your reading assignment in Chapter 10. Because the two concepts overlap, you will hear validity mentioned here as well. I hope not to confuse anyone.

Please note that reliability is one of the most important qualities of a research tool. Think of reliability as the degree of consistency with which an instrument measures the attribute it is supposed to measure. If a measuring tool produces consistent results under consistent conditions, it is said to be reliable; whether it measures the right thing is a question of validity.

Estimation of reliability:

- Stability – it is the extent to which the same results are obtained repeatedly during testing.

- Test/Re-test method (p. 145).

- Equivalence – shows the consistency of performance on different forms of the test; it is based on the correlation between performance on the different forms administered at the same time.

- Inter-rater method – this is estimated by having two or more trained observers watch the same event simultaneously and independently, then record the relevant variable.

- Intra-rater method – when a single researcher produces both sets of scores being compared, the approach is called the intra-rater method of calculating reliability.

- Internal consistency – this shows the consistency of performance on the different parts or items of the test taken at a single sitting.

- Pilot study – it is the entire operation in a miniature version. It is a careful empirical checking of all phases of the study from the collection of data to their tabulation and analysis.
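
As a rough illustration of how some of these estimates are commonly computed, the Python sketch below uses a Pearson correlation for stability (test-retest), Cronbach's alpha for internal consistency, and simple percent agreement for inter-rater reliability. These particular statistics are common choices and are an assumption here, not necessarily the ones presented in Chapter 9. The function names are hypothetical, and all scores are made-up numbers for demonstration.

```python
from math import sqrt
from statistics import mean, variance


def pearson_r(x, y):
    """Correlation between two sets of scores, e.g. test and re-test."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def cronbach_alpha(items):
    """Internal consistency; `items` holds one list of scores per test item,
    with respondents in the same order in every list."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]   # total score per respondent
    item_variance_sum = sum(variance(scores) for scores in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


def percent_agreement(rater_a, rater_b):
    """Share of observations on which two raters recorded the same value."""
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)


# Made-up scores for five respondents, for demonstration only.
test_scores = [12, 15, 9, 20, 17]
retest_scores = [13, 14, 10, 19, 18]
stability = pearson_r(test_scores, retest_scores)

# Three items from a hypothetical questionnaire, same five respondents.
item_scores = [[3, 4, 2, 5, 4], [3, 5, 2, 4, 4], [2, 4, 3, 5, 5]]
alpha = cronbach_alpha(item_scores)

# Two observers coding the same six events as present (1) or absent (0).
agreement = percent_agreement([1, 0, 1, 1, 0, 1], [1, 0, 0, 1, 0, 1])
```

Percent agreement does not correct for agreement that occurs by chance; chance-corrected statistics exist for that purpose and may be preferable in a real study.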

Summary Points:

- Psychological researchers do not simply assume that their measures work. Instead, they conduct research to show that they work. If they cannot show that they work, they stop using them. You will see this while exploring the methodology section of your research.

- There are two distinct criteria by which researchers evaluate their measures: reliability and validity. Reliability is consistency across time (test-retest reliability), across items (internal consistency), and across researchers (inter-rater reliability). Validity is the extent to which the scores represent the variable they are intended to represent.

- Validity is a judgment based on various types of evidence. The relevant evidence includes the measure’s reliability, whether it covers the construct of interest, and whether the scores it produces are correlated with other variables they are expected to be correlated with and not correlated with variables that are conceptually distinct.

- The reliability and validity of a measure are not established by any single study but by the pattern of results across multiple studies. The assessment of reliability and validity is an ongoing process.

For additional clarification and supplemental resources, please access the following:

Video: Understanding Measurement Validity

https://www.youtube.com/watch?v=kkjjZtFV9ZE

Video: Reliability and Validity of Measurement

https://www.youtube.com/watch?v=VTHWQOuEfiM

Article: Understanding Reliability and Validity in Qualitative Research

https://nsuworks.nova.edu/tqr/vol8/iss4/6/

Dr. Davis

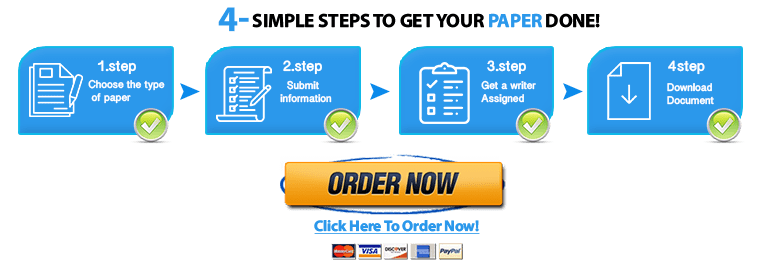

"Looking for a Similar Assignment? Order now and Get 10% Discount! Use Code "Newclient"